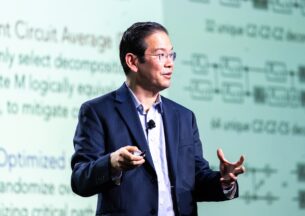

Ken Perlin (NYU) - Experiments in Future Reality

Extended reality (XR) eyewear and next-generation wireless connectivity will soon enable people to communicate with each other through words and gestures as though they are physically together, wherever they may be located. Meanwhile, generative AI will help us to harness those words and gestures to solve problems in many fields, including science, engineering, architecture, physical therapy, medicine, and education.

There are exciting research challenges ahead in understanding how XR, AI, spatial audio, and tactile interfaces can lead to a new paradigm – Experimental Computing – that seamlessly integrates rich, digitally mediated shared experiences into our life and work. In this talk, I will describe some of the challenges and opportunities in achieving the full potential of Experimental Computing, and how we can use the tools of today to better understand the possibilities of tomorrow.

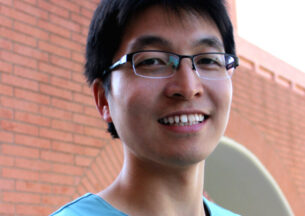

Speakers

Ken Perlin

Ken Perlin is a Professor of Computer Science at NYU and a distinguished researcher in XR (VR and AR), graphics, and animation. He is also known for winning an Academy Award for Perlin noise, the technique that bears his name: a type of gradient noise that visual effects artists use to make computer graphics appear more realistic.