Kristina Toutanova (Google) - Advances and Limitations in Generalization via Self-Supervised Pretrained Representations

Advances and Limitations in Generalization via Self-Supervised Pretrained Representations

Pretrained neural representations, learned from unlabeled text, have recently led to substantial improvements across many natural language problems. Yet some components of these models are still brittle and heuristic, and sizable amounts of human-labeled data are typically needed to obtain competitive performance on end tasks.

I will first talk about recent advances from our team leading to (i) improved multilingual generalization and ease of use through tokenization-free pretrained representations and (ii) better few-shot generalization for underrepresented task categories via neural language model-based example extrapolation. I will then point to limitations of generic pretrained representations when tasked with handling both language variation and out-of-distribution compositional generalization, and to the relative performance of induced symbolic representations.

This talk is part of the TTIC Colloquium and will be presented on Zoom; register here for details.

Host: Toyota Technology Institute at Chicago

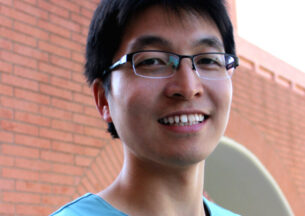

Kristina Toutanova

Kristina Toutanova is a research scientist at Google Research in Seattle and an affiliate faculty member at the University of Washington. She obtained her Ph.D. from the Computer Science Department at Stanford University with Christopher Manning, and her MSc in Computer Science from Sofia University, Bulgaria. Prior to joining Google in 2017, she was a researcher at Microsoft Research, Redmond. Kristina focuses on modeling the structure of natural language using machine learning, most recently in the areas of representation learning, question answering, information retrieval, and semantic parsing. Kristina is a past co-editor-in-chief of TACL, a program co-chair for ACL 2014, and a general chair for NAACL 2021.