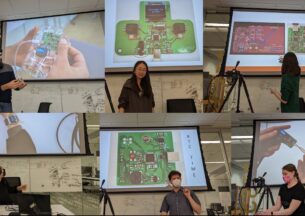

Yu She (MIT) - Integrating Flexibility and Rigidity: Design and Perception for Safe and Performant Robots

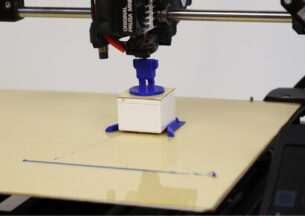

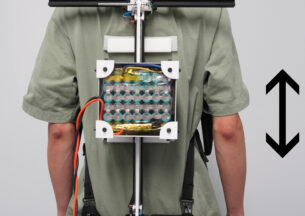

Conventional rigid robots provide high performance (e.g., high accuracy and speed) but can be dangerous for humans nearby, while recent soft robots offer inherent safety but may suffer from limited performance in some cases. Can we combine the advantages of rigid and soft robots to create a new type of robot? A good physical platform alone is not enough to perform a complex robotic manipulation task. We also need a good perception system to understand the state of the machine itself and the state of the objects it interacts with. Can we build a perception system that provides such rich information for feedback in real time, especially when the physical platform or the objects it interacts with include flexible components with complex states? In this talk, I will present some of my recent work addressing these questions. First, I will talk about the design of a shape-morphing arm with variable stiffness for safe physical human-robot interaction. Second, I will discuss the design and perception of an exoskeleton-covered soft robotic gripper that employs embedded vision sensors to provide high-resolution proprioception and exteroception simultaneously. Finally, I will describe the development of a tactile-reactive gripper with compliant joints that can perform cable manipulation tasks by leveraging vision-based tactile sensors (GelSight).

Host: Pedro Lopes

Yu She

Yu She is a postdoctoral researcher at the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL), working with Prof. Edward Adelson and Prof. Alberto Rodriguez. He received his PhD in Mechanical Engineering from The Ohio State University in 2018 under the supervision of Prof. Hai-Jun Su. His research interests include the design, perception, and control of intelligent robotic systems, with an emphasis on human-safe collaborative robots, constrained soft robots, and robotic manipulation. He received the Presidential Fellowship at The Ohio State University and is a recipient of the 2018 DSCC Best Paper award.