Three EPiQC Papers Chosen By IEEE Micro for Annual Top Picks Awards

Three UChicago CS papers advancing the field of quantum computing were recognized by the prestigious IEEE Micro Top Picks roundup of the best research from 2020 computer architecture conferences. The studies describe new methods for overcoming challenges in error correction and size constraints for the next wave of quantum computers, shortening the gap to practical and innovative uses of this exciting new technology.

A paper led by graduate students Casey Duckering and Jonathan Baker received the Top Pick designation from the journal, while two papers led by graduating PhD student Yongshan Ding were given honorable mention. All three studies come from the research group of Seymour Goodman Professor of Computer Science Fred Chong, the director of the NSF-funded Enabling Practical-scale Quantum Computation (EPiQC) expedition.

Together, the three papers develop new approaches in hardware and software to make quantum computers less error-prone and more efficient, critical steps to tap into the potential of these architectures for studying physics, chemistry, cryptography and other applications. From “2.5-dimensional” qubit memory to improved “garbage collection” in quantum systems with uncomputation to error reduction through frequency tuning, these creative ideas hold great promise in the rapidly growing field.

“Our latest works are great examples of how quantum software and architecture can adapt to the physics of quantum devices to create systems that are significantly more reliable in running quantum algorithms,” Chong said.

A New (Half) Dimension for Quantum Memory

One of the most striking aesthetic features of today’s quantum computers is the intricate web of wires that dart in and out of the chip and its surrounding dilution refrigerator, which produces the extreme cold temperatures necessary for their operation. The complex wiring is a result of each qubit — the quantum analogue of the computing bit — requiring its own dedicated control wires in the most common superconducting qubit architectures. But while existing machines top out around 50 qubits, scientists imagine that quantum computers will eventually reach thousands or millions of qubits, making such complicated wiring infeasible.

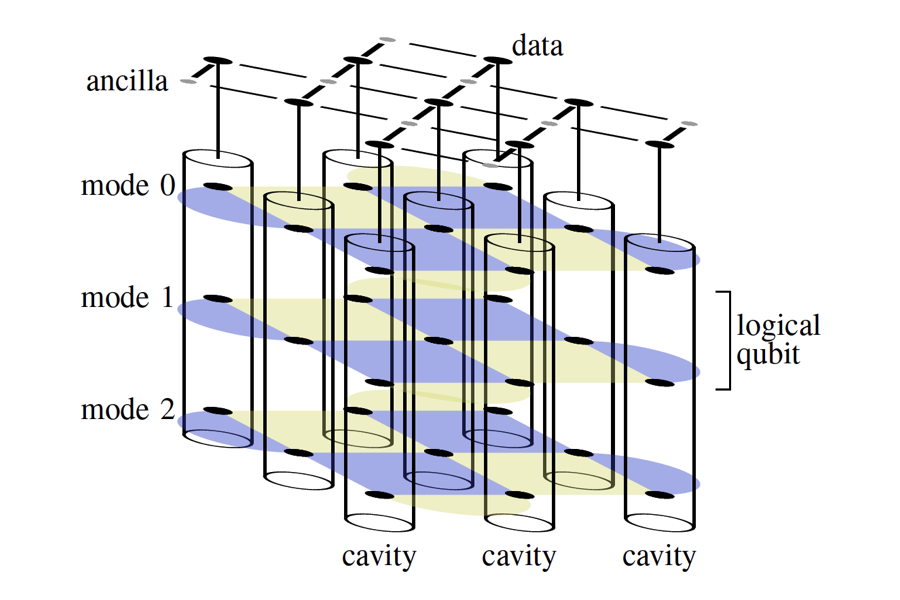

By simulating the placement of qubits in a different type of structure, a resonant cavity, Duckering and Baker found that they could ease this overcrowding problem tenfold while preserving the performance of future “fault-tolerant” quantum computers. The technology, developed by UChicago Associate Professor of Physics David Schuster, stores computational qubits alongside 10 or more memory qubits within each cavity, virtualizing logical qubits.

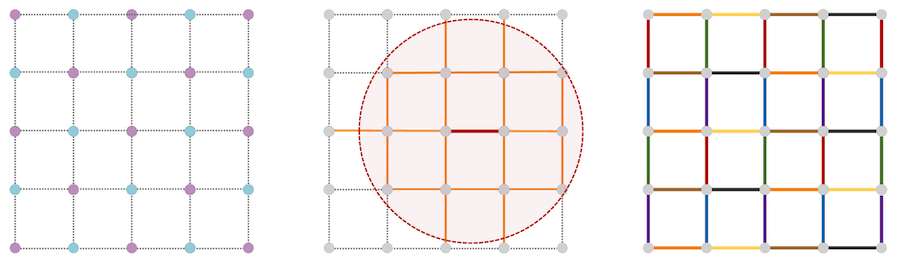

Their concept replaces the current standard two-dimensional array of qubits with a 2.5-dimensional configuration, which they named after video games where characters move in 2D against a background with limited 3D depth.

“With this arrangement, you can make the grid of qubits as big as you need, subject to the constraints of how many wires you can plug into the computer, while the cavity size is limited,” Duckering said. “Our simulation found that if you have this kind of architecture, you can perform error correction with a 10x reduction in the control electronics requirements and the number of control wires you need going into the dilution refrigerator.”

The resonant cavity concept is somewhat similar to the in-memory computing approach used in highly parallel computing, where information is stored locally near processing nodes rather than on separate disk drives. But the authors are not aware of it being used by any of the major figures in the quantum computing race, and the Top Picks award may help spread the word about its potential.

“The job of a computer architect is to demonstrate the viability of new technology,” Baker said. “One of the central points of this paper is that with this fairly new technology, resonant cavity for memory, we're demonstrating the value beyond just near-term advantage. You could use this for fault-tolerant computing to get improvements in space and time.”

Hushing Crosstalk and Rethinking Garbage Collection

While reimagining the hardware can unlock potential in tomorrow’s quantum computers, software can also play a role in improving their performance. The two honorable mention papers led by Ding explore this avenue, developing software solutions for blocking interference between qubits and efficiently recycling qubits, two strategies that can help users get the most from the limited scale of current and near-future quantum machines.

“Crosstalk” is one of the most common issues with today’s superconducting quantum computers, occurring when unwanted interference happens between neighboring qubits, introducing errors during the running of a program. Often this crosstalk occurs when two qubits share resonant frequencies, not unlike when a radio receives competing signals from two stations using the same broadcast channel.

While hardware solutions have been used by manufacturers for this issue, Ding’s paper with Pranav Gokhale, Sophia Fuhui Lin, Richard Rines, Thomas Propson, and Chong puts the software compiler in charge of assigning frequencies so that this crosstalk doesn’t occur. The approach systematically tunes qubit frequencies according to input programs, minimizing the chance of errors and improving program success rate by more than 13 times. Ding’s research found that it could be immediately useful for some of the systems under development by the quantum computing industry.

“This work proves that a software solution, in this case, works surprisingly well,” Ding said. “Our compiler shows significant improvement in average gate fidelity, compared to IBM’s fixed frequency systems, and we allow the tunable qubits fixed coupler design, like Rigetti’s, to achieve similar fidelity of a more complex design with tunable couplers, such as Google’s systems. It is a great example where software design can greatly simplify hardware complexity required for scaling up quantum systems.”
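To make the idea of compiler-assigned frequencies concrete, here is a minimal sketch that treats the problem as graph coloring: each qubit on a grid gets a frequency that none of its directly coupled neighbors already uses. This is an illustration only, not the method from Ding’s paper, which tunes frequencies according to each input program. The grid layout, the function names, and the frequency values (written in GHz) are all assumptions chosen for the example.

```python
from itertools import product


def grid_neighbors(rows, cols):
    """Map each qubit (r, c) on a rows x cols grid to its nearest neighbors."""
    nbrs = {}
    for r, c in product(range(rows), range(cols)):
        candidates = [(r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)]
        nbrs[(r, c)] = [
            (i, j) for i, j in candidates if 0 <= i < rows and 0 <= j < cols
        ]
    return nbrs


def assign_frequencies(nbrs, frequencies):
    """Greedy coloring: give each qubit the first frequency that no
    already-assigned neighbor uses. Hypothetical helper for illustration."""
    assignment = {}
    for qubit in sorted(nbrs):
        taken = {assignment[n] for n in nbrs[qubit] if n in assignment}
        free = [f for f in frequencies if f not in taken]
        if not free:
            raise ValueError(f"no collision-free frequency left for {qubit}")
        assignment[qubit] = free[0]
    return assignment


def collisions(nbrs, assignment):
    """List neighboring qubit pairs that ended up on the same frequency."""
    return [
        (a, b)
        for a in nbrs
        for b in nbrs[a]
        if a < b and assignment[a] == assignment[b]
    ]


if __name__ == "__main__":
    nbrs = grid_neighbors(3, 3)
    # Assumed frequency values in GHz, chosen only for the example.
    plan = assign_frequencies(nbrs, [5.0, 5.1, 5.2])
    for qubit in sorted(plan):
        print(qubit, plan[qubit])
    print("colliding pairs:", collisions(nbrs, plan))
```

On a square grid this greedy pass settles into a checkerboard pattern, so two frequencies are enough to keep direct neighbors apart. A real compiler would also have to account for which gates run at the same time and for the hardware’s tunable range, which this sketch ignores.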

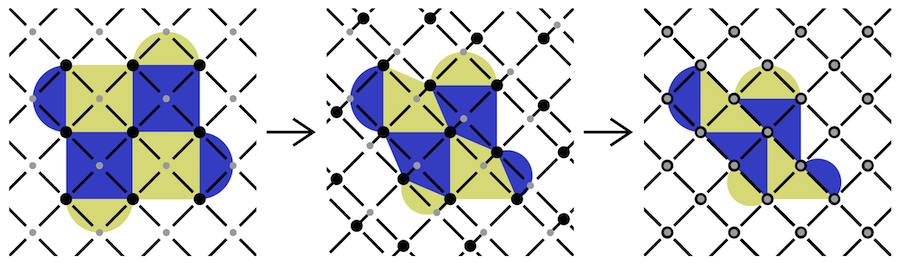

A second paper, authored by Ding with Xin-Chuan Wu, Adam Holmes, Ash Wiseth, Diana Franklin, Margaret Martonosi, and Chong, updates the classical computing principle of “garbage collection” for the quantum world. In systems with limited memory, garbage collection algorithms find saved information that is no longer needed for a program to run and dump it, freeing up resources for future steps. Just as this principle was important for the early days of computing in the mid-20th century, it’s critical for today’s quantum computers, which operate with only dozens of qubits.

Ding and his colleagues propose SQUARE (Strategic QUantum Ancilla REuse), the first system-level quantum memory management technique. The approach uses “uncomputation,” recycling a used qubit so that it can be redeployed elsewhere. As this process involves high operational costs, a user must find the right balance between performing uncomputation too frequently and too seldom. As with the crosstalk strategy, the researchers optimized this decision-making by assigning it to the compiler, which evaluates the best places in a program to perform this recycling task, even for the current generation of noisy intermediate-scale computers.

“We observe that garbage collection of scratch qubits is, surprisingly, often cheaper than moving new scratch qubits across a quantum chip,” Ding said. “This changes the way quantum systems allocate and manage qubits, greatly extending their practical use for a given machine size.”
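For readers unfamiliar with uncomputation, the sketch below shows the basic compute, copy, uncompute pattern on ordinary bits. It is a classical stand-in under stated assumptions: real qubits can be in superposition, and SQUARE’s contribution is deciding when to uncompute, which this example does not attempt. The function and register names are hypothetical.

```python
def toffoli(bits, control_1, control_2, target):
    """Reversible AND: flip target when both controls are 1."""
    bits[target] ^= bits[control_1] & bits[control_2]


def cnot(bits, control, target):
    """Reversible copy: flip target when control is 1."""
    bits[target] ^= bits[control]


def and_with_uncompute(a, b):
    """Compute a AND b into 'out', then return the scratch bit to 0."""
    bits = {"a": a, "b": b, "scratch": 0, "out": 0}
    toffoli(bits, "a", "b", "scratch")  # compute: scratch now holds a AND b
    cnot(bits, "scratch", "out")        # copy the result to the output bit
    toffoli(bits, "a", "b", "scratch")  # uncompute: scratch is back to 0
    return bits


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, and_with_uncompute(a, b))
```

Because the scratch bit ends in its starting state, it can be handed to a later step instead of a fresh one being allocated. The cost is the repeated gate, which is the trade-off between uncomputing too often and too rarely.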

IEEE Micro published its annual “Top Picks from the Computer Architecture Conferences” in its May/June 2021 issue. The awards recognize “significant and insightful papers that have the potential to influence the work of computer architects for years to come.” Chong’s group and EPiQC previously received two Top Picks honors in 2020, while a paper from the group of UChicago CS associate professor Hank Hoffmann received an honorable mention in 2019.