Rina Foygel Barber (UChicago) - Predictive inference with the jackknife+

Predictive inference with the jackknife+

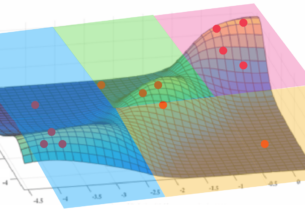

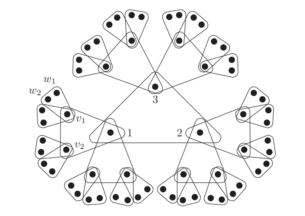

We introduce the jackknife+, a novel method for constructing predictive confidence intervals that is robust to the distribution of the data. The jackknife+ modifies the well-known jackknife (leave-one-out cross-validation) to account for the variability in the fitted regression function when we subsample the training data. Assuming exchangeable training samples, we prove that the jackknife+ permits rigorous coverage guarantees regardless of the distribution of the data points, for any algorithm that treats the training points symmetrically. Such guarantees are not possible for the original jackknife, and we demonstrate examples where its coverage rate may actually vanish. Our theoretical and empirical analysis reveals that the jackknife and jackknife+ intervals achieve nearly exact coverage and have similar lengths whenever the fitting algorithm obeys some form of stability. We also extend the method to the setting of K-fold cross-validation. Our methods are related to cross-conformal prediction proposed by Vovk [2015], and we discuss connections. This work is joint with Emmanuel Candès, Aaditya Ramdas, and Ryan Tibshirani.
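To make the construction concrete, here is a minimal Python sketch of a jackknife+ interval, written from the description in the published paper rather than taken from the talk itself. The function names (`fit_predict`, `jackknife_plus_interval`), the choice of ordinary least squares as the fitting algorithm, and the default `alpha` are assumptions made for illustration; any algorithm that treats the training points symmetrically could be substituted. For each training point i, the model is refit without that point, and the leave-one-out prediction at the new point is shifted down and up by the leave-one-out residual; the interval endpoints are order statistics of those shifted values. As stated in the paper, the resulting interval has coverage at least 1 - 2*alpha under exchangeability.

```python
import math
import numpy as np


def fit_predict(X_train, y_train, X_eval):
    """Hypothetical base learner: ordinary least squares with an intercept."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    coef, *_ = np.linalg.lstsq(A, y_train, rcond=None)
    B = np.column_stack([np.ones(len(X_eval)), X_eval])
    return B @ coef


def jackknife_plus_interval(X, y, x_new, alpha=0.1):
    """Sketch of a jackknife+ interval for the response at x_new.

    X is an (n, d) array, y an (n,) array, x_new a (d,) array.
    """
    n = len(y)
    x_new = np.atleast_2d(x_new)
    lower_vals = np.empty(n)
    upper_vals = np.empty(n)
    for i in range(n):
        keep = np.arange(n) != i  # leave point i out of the fit
        preds = fit_predict(X[keep], y[keep], np.vstack([X[i:i + 1], x_new]))
        resid = abs(y[i] - preds[0])      # leave-one-out residual at point i
        lower_vals[i] = preds[1] - resid  # leave-one-out prediction at x_new, shifted down
        upper_vals[i] = preds[1] + resid  # and shifted up
    lower_vals.sort()
    upper_vals.sort()
    k_lo = math.floor(alpha * (n + 1))       # rank of the lower endpoint
    k_hi = math.ceil((1 - alpha) * (n + 1))  # rank of the upper endpoint
    lo = lower_vals[k_lo - 1] if k_lo >= 1 else -np.inf
    hi = upper_vals[k_hi - 1] if k_hi <= n else np.inf
    return lo, hi
```

The classical jackknife would instead center the interval at the full-data prediction and use only the leave-one-out residuals; the sketch differs in using the leave-one-out predictions at the new point as well, which is how it reflects the variability of the fitted function. When `alpha` is too small for the sample size, the sketch returns an infinite endpoint rather than an out-of-range order statistic.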

Host: Eric Jonas

Rina Foygel Barber

Rina Foygel Barber is an Associate Professor in the Department of Statistics at the University of Chicago. Before starting at U of C, she was an NSF postdoctoral fellow during 2012-13 in the Department of Statistics at Stanford University, supervised by Emmanuel Candès. She received her PhD in Statistics at the University of Chicago in 2012, advised by Mathias Drton and Nati Srebro, and an MS in Mathematics at the University of Chicago in 2009. Prior to graduate school, she was a mathematics teacher at the Park School of Baltimore from 2005 to 2007.