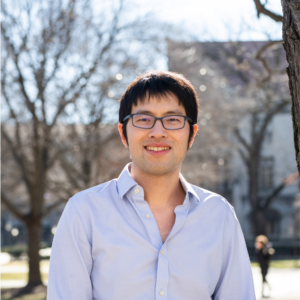

Chenhao Tan is an associate professor of computer science at the University of Chicago. He obtained his PhD in computer science from Cornell University and bachelor's degrees in computer science and in economics from Tsinghua University. Before joining UChicago, he spent a year as a postdoc at the University of Washington and three years as an assistant professor at CU Boulder. His research interests include human-centered machine learning, natural language processing, and computational social science. He has published primarily at ACL and WWW, as well as at KDD, WSDM, ICWSM, and other venues. His work has been covered by news outlets such as The New York Times and The Washington Post. He has also received an NSF CAREER award, an NSF CRII award, a Salesforce research award, an Amazon research award, a Facebook fellowship, and a Yahoo! Key Scientific Challenges award.

Research

Focus Areas: Computational Social Science, Human-centered AI, Natural Language Processing

My research brings together the social sciences and machine learning to develop the best AI for humans. Specifically, my work aims to enable effective human-AI interaction by:

Understanding human decision making through language. We analyze large amounts of textual data to uncover the connection between language and decisions in two directions. In one direction, we leverage natural experiments to understand how language shapes human decisions (e.g., what makes persuasion effective and how language is used in bargaining). In the other direction, we examine the explanations people give for their decisions to identify biases in those decisions.

Generating human-centered explanations. Our work shows that current methods of generating explanations for AI predictions fail to improve human-AI decision making. We develop a novel theoretical framework showing that the missing link is modeling how humans interpret AI explanations. We therefore build algorithms that align AI explanations with human intuitions and demonstrate substantial improvements in human performance.

Developing novel paradigms of human-AI interaction. We explore additional ways in which humans and AI can complement each other, in three directions: 1) appropriate and effective delegation to AI; 2) decision-focused summarization, a novel formulation of the classic NLP task that identifies the information most relevant to supporting a decision; and 3) few-shot learning from human explanations, so that humans can effectively improve large language models (LLMs).