Google Award to Prof. Andrew Chien Will Explore Regional Optimization for Greener Cloud

The internet’s power bill is big, and only getting bigger. Today, the huge data centers run by companies such as Google, Facebook, and Amazon use roughly 2 percent of the world’s electricity, and some studies project an increase to as high as 8 percent over the next decade. Recognizing the environmental impact, the biggest tech companies have launched campaigns to switch to carbon-free energy; in 2017, Google announced that it had reached its goal of purchasing enough renewable energy to offset all of its operations.

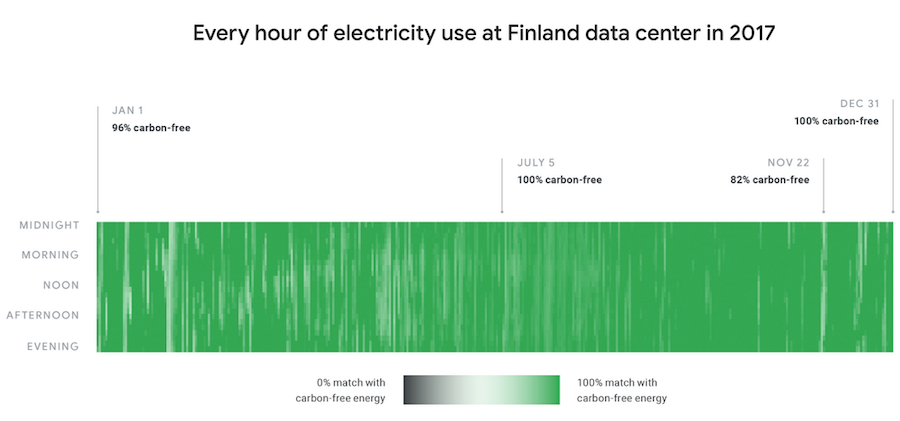

Recently, Google set an even higher standard for itself: not just offsetting its energy use on a global and annual basis, but moving towards round-the-clock “hourly matching” of green energy at its data centers. Instead of buying wind or solar power whenever there is a surplus of these renewables in order to cancel out its carbon footprint, Google wants to make sure it is buying enough carbon-free energy to offset its activity 24 hours a day, 7 days a week.

Some centers, such as a location in Finland, already achieve this “hourly offset” 97 percent of the time. Others, such as a data center in Taiwan, perform much more poorly. Meeting this goal will require not only hourly matching, but also regional matching — using clean energy generated near data centers, rather than in another part of the world.
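The gap between annual offsetting and hourly matching can be shown with a little arithmetic. The Python sketch below is illustrative only: the function names and the four-hour numbers are hypothetical, not Google's accounting method or real data. It assumes that an hour counts as "matched" when carbon-free supply meets or exceeds demand in that hour.

```python
def annual_offset_ratio(demand_mwh, clean_mwh):
    """Total carbon-free energy purchased divided by total energy used."""
    return sum(clean_mwh) / sum(demand_mwh)


def hourly_match_fraction(demand_mwh, clean_mwh):
    """Fraction of hours in which carbon-free supply covers that hour's demand."""
    matched = sum(1 for d, c in zip(demand_mwh, clean_mwh) if c >= d)
    return matched / len(demand_mwh)


# Hypothetical four-hour window: steady demand, clean supply only in the first two hours.
demand = [10, 10, 10, 10]
clean = [25, 15, 0, 0]

print(annual_offset_ratio(demand, clean))    # totals balance: 40 / 40
print(hourly_match_fraction(demand, clean))  # only 2 of the 4 hours are covered
```

In this made-up case the data center is fully offset when energy is totaled over the whole window, yet it is matched in only half of the hours. That difference is what the hourly standard is meant to expose.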

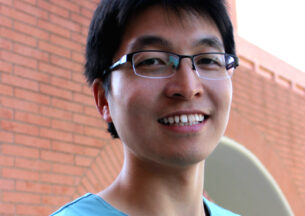

To help solve this problem, the company has given a Google Research Award to a team led by Andrew A. Chien, William Eckhardt Distinguished Service Professor of Computer Science at the University of Chicago. The project, “Optimizing Power Cost and Carbon Footprint for a Distributed Collection of Data Centers,” dovetails with Chien’s Zero-Carbon Cloud project, which seeks to exploit phenomena in modern power grid markets such as “stranded power” to create carbon-free data centers.

The Google project taps ZCCloud’s larger grid and regional focus to pursue regional matching. As Chien discusses in the video below, the new Google method of defining “carbon neutrality” is in line with how ZCCloud has been thinking about it for the last few years.

“This is pretty exciting,” Chien said. “It’s the first step in a major transformation and ultimately I expect to see Google move to co-optimize in a fashion similar to Zero-Carbon Cloud.”

[Watch a recent lecture by Andrew Chien at Rice University on the Zero-Carbon Cloud]

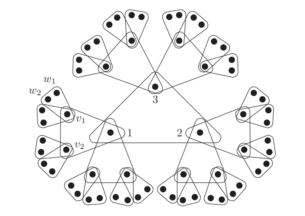

This new branch of the ZCCloud project will examine how to exploit the distributed nature of industry data centers to meet environmental goals. Google operates hundreds of data centers around the globe, and the price and source of electricity can vary widely between these locations. Because some of the computer processing work of data centers can — at least in theory — be performed at any of these locations, the ability to shift workloads towards wherever electricity is greenest and cheapest at any given moment could provide financial and environmental benefits.
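As a rough illustration of the workload-shifting idea, the Python sketch below greedily places a block of flexible load at whichever sites currently score best on a weighted mix of carbon intensity and price. It is a simplified assumption for explanation, not the algorithm the ZCCloud team or Google uses; the `Site` fields, the weights, and all the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    carbon_g_per_kwh: float   # hypothetical grid carbon intensity this hour
    price_per_mwh: float      # hypothetical electricity price this hour
    spare_capacity_mw: float  # load the site can still accept


def place_flexible_load(sites, load_mw, carbon_weight=1.0, price_weight=0.0):
    """Greedily fill the best-scoring sites first; return (placement, unplaced load)."""
    def score(site):
        return carbon_weight * site.carbon_g_per_kwh + price_weight * site.price_per_mwh

    placement = {}
    remaining = load_mw
    for site in sorted(sites, key=score):
        if remaining <= 0:
            break
        assigned = min(site.spare_capacity_mw, remaining)
        if assigned > 0:
            placement[site.name] = assigned
            remaining -= assigned
    return placement, remaining


# Hypothetical snapshot of three sites for a single hour.
sites = [
    Site("site_a", carbon_g_per_kwh=50.0, price_per_mwh=30.0, spare_capacity_mw=40.0),
    Site("site_b", carbon_g_per_kwh=400.0, price_per_mwh=20.0, spare_capacity_mw=100.0),
    Site("site_c", carbon_g_per_kwh=120.0, price_per_mwh=45.0, spare_capacity_mw=30.0),
]

placement, unplaced = place_flexible_load(sites, load_mw=60.0)
print(placement, unplaced)
```

With the default weights, which consider carbon only, the 60 MW of flexible load fills the cleanest site first and spills the rest to the next cleanest. Raising `price_weight` would pull load towards cheaper sites instead, which is the cost-versus-carbon tradeoff the project sets out to study.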

Working with a realistic cloud operations and data center perspective provided by Google, combined with power market and grid data from electric power system operators CAISO, MISO, and ERCOT, Chien and colleagues will simulate the carbon footprint and power cost benefits of a variety of approaches for the cloud and grid of the future: radically more data centers and increased wind and solar energy.

They will also build and test better algorithms for how to best take advantage of workload flexibility, exploiting a variety of external factors such as weather and day of the week. Importantly, the research will also look at how this coupling of data centers and power grids would affect other energy customers, and whether it would incentivize increased usage of renewable electricity sources in general, not just among Big Tech.

“Demonstrating these bottom-line feasibility and Earth-friendly benefits will not only help Google realize their carbon-free aspirations, but encourage other cloud companies to adopt similar strategies,” Chien said. “And ultimately, we believe that these dynamic data center management techniques will have broad impact on both the software stacks and physical engineering of data centers.”