UChicago CS Researchers Create New Protection Against Facial Recognition

The rapid rise of facial recognition systems has placed the technology into many facets of our daily lives, whether we know it or not. What might seem innocuous when Facebook identifies a friend in an uploaded photo becomes more ominous in the hands of companies such as Clearview AI, which trained its facial recognition system on billions of images scraped from social media and the internet without consent. So far, people have had few protections against this use of their images, apart from not sharing photos publicly at all.

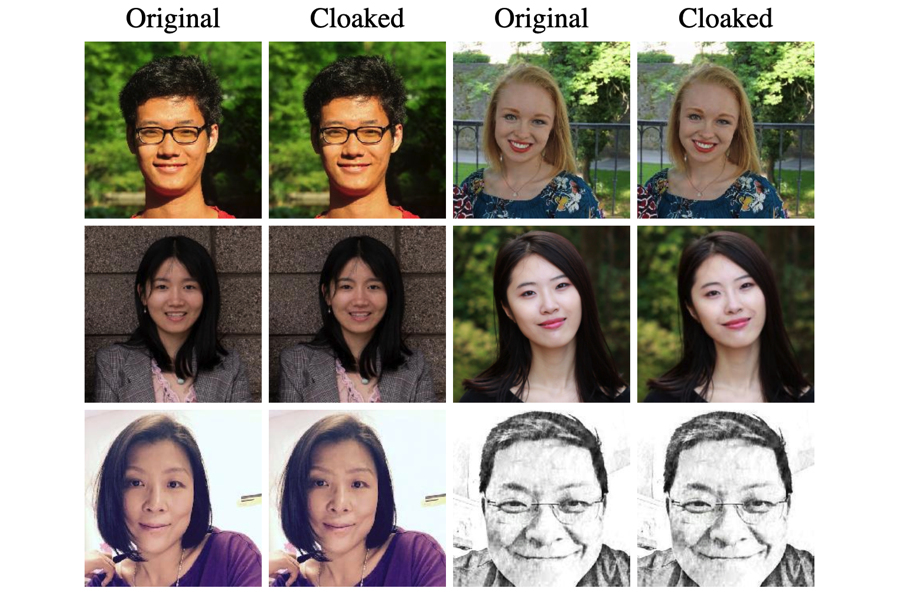

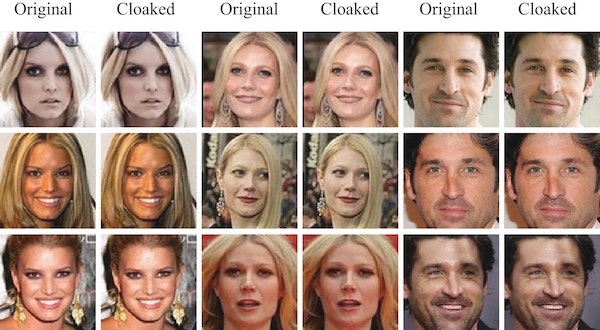

A new research project from the University of Chicago Department of Computer Science provides a powerful new protection mechanism. Named Fawkes, the software tool “cloaks” photos to trick the deep learning computer models that power facial recognition, without changes noticeable to the human eye. With enough cloaked photos in circulation, a computer observer will be unable to identify a person from even an unaltered image, protecting individual privacy from unauthorized and malicious intrusions. The tool targets unauthorized use of personal images and has no effect on models built using legitimately obtained images, such as those used by law enforcement.

“It's about giving individuals agency,” said Emily Wenger, a third-year PhD student and co-leader of the project with first-year PhD student Shawn Shan. “We're not under any delusions that this will solve all privacy violations, and there are probably both technical and legal solutions to help push back on the abuse of this technology. But the purpose of Fawkes is to provide individuals with some power to fight back themselves, because right now, nothing like that exists.”

In a paper that will be presented at the USENIX Security symposium this month, the researchers found that the method was nearly 100 percent effective at blocking recognition by state-of-the-art models from Amazon, Microsoft, and other companies. While it can’t disrupt existing models already trained on unaltered images downloaded from the internet, publishing cloaked images can eventually erase a person’s online “footprint,” the authors said, rendering future models incapable of recognizing that individual.

“In many cases, we do not control all the images of ourselves online; some could be posted from a public source or posted by our friends,” Shan said. “In this scenario, Fawkes remains successful when the number of cloaked images exceeds that of uncloaked images. So for users who already have a lot of images online, one way to improve their protection is to release even more images of themselves, all cloaked, to balance out the ratio.”

The technique builds on the fact that machines “see” images differently than humans do. To a machine learning model, an image is simply a set of numbers representing its pixels, which systems known as neural networks mathematically organize into features that they use to distinguish between objects or individuals. When fed enough different photos of a person, these models can use these unique features to identify the person in new photos, a technique used in security systems and smartphones and, increasingly, in law enforcement, advertising, and other controversial applications.
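For readers curious about the mechanics, the sketch below shows feature-based identification in miniature. It is a toy illustration under stated assumptions, not how Fawkes or any commercial system is built: the fixed matrix `WEIGHTS` is a hypothetical stand-in for a trained neural network, the four-number "images" are made up, and `extract_features`, `enroll`, and `identify` are illustrative names. Each person is enrolled by averaging the features of their photos, and a new photo gets the label of the nearest enrolled person.

```python
import math

# Hypothetical stand-in for a trained neural network: a fixed linear map
# from a flattened 4-pixel "image" to a 2-dimensional feature vector.
WEIGHTS = [
    [0.5, -0.2, 0.1, 0.3],
    [-0.1, 0.4, 0.6, -0.3],
]


def extract_features(pixels):
    """Turn raw pixel values into a feature vector (one value per row of WEIGHTS)."""
    return [sum(w * p for w, p in zip(row, pixels)) for row in WEIGHTS]


def centroid(vectors):
    """Average a list of equal-length vectors component by component."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def enroll(photos_by_person):
    """Build a gallery mapping each name to the mean feature vector of their photos."""
    return {
        name: centroid([extract_features(photo) for photo in photos])
        for name, photos in photos_by_person.items()
    }


def identify(gallery, pixels):
    """Label a new photo with the enrolled identity nearest in feature space."""
    features = extract_features(pixels)
    return min(gallery, key=lambda name: math.dist(gallery[name], features))


gallery = enroll({
    "alice": [[0.9, 0.1, 0.2, 0.8], [0.8, 0.2, 0.1, 0.9]],
    "bob": [[0.1, 0.9, 0.8, 0.1], [0.2, 0.8, 0.9, 0.2]],
})
print(identify(gallery, [0.85, 0.15, 0.15, 0.85]))  # prints alice
```

Real systems use deep networks with far larger feature vectors, but the principle is the same: the label a photo receives depends on where its features land relative to the enrolled identities.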

Fawkes is named for the Guy Fawkes mask worn by revolutionaries in the graphic novel V for Vendetta. With it, Wenger and Shan, along with collaborators Jiayun Zhang, Huiying Li, and UChicago Professors Ben Zhao and Heather Zheng, exploit this difference between human and computer perception to protect privacy. By changing a small percentage of the pixels to dramatically alter how the person is perceived by the computer’s “eye,” the approach taints the facial recognition model so that it labels real photos of the user with someone else’s identity. To a human observer, however, the image appears unchanged.
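The cloaking idea can be sketched as a small optimization: nudge each pixel within a tight budget so that the image's features drift toward those of a different identity. This is a hedged illustration, not the Fawkes implementation. The linear `WEIGHTS` extractor, the per-pixel budget `epsilon`, the step size, the iteration count, and the helper names `extract_features`, `feature_distance_sq`, and `cloak` are all assumptions made for this example; the actual tool targets deep feature extractors and uses its own measure of how much an image may change.

```python
# Hypothetical stand-in for a face recognizer's feature extractor: a fixed
# linear map from a flattened 4-pixel "image" to a 2-dimensional feature vector.
WEIGHTS = [
    [0.5, -0.2, 0.1, 0.3],
    [-0.1, 0.4, 0.6, -0.3],
]


def extract_features(pixels):
    return [sum(w * p for w, p in zip(row, pixels)) for row in WEIGHTS]


def feature_distance_sq(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def cloak(pixels, target_features, epsilon=0.05, step=0.01, iterations=50):
    """Change each pixel by at most `epsilon` so the image's features move toward
    `target_features` (projected sign-gradient descent on squared feature distance)."""
    cloaked = list(pixels)
    for _ in range(iterations):
        residual = [f - t for f, t in zip(extract_features(cloaked), target_features)]
        # For a linear extractor W, the gradient of ||W x - t||^2 is 2 * W^T (W x - t).
        gradient = [
            2 * sum(row[i] * r for row, r in zip(WEIGHTS, residual))
            for i in range(len(cloaked))
        ]
        for i, g in enumerate(gradient):
            sign = (g > 0) - (g < 0)
            stepped = cloaked[i] - step * sign
            # Project back into the budget around the original pixel and the valid [0, 1] range.
            low = max(0.0, pixels[i] - epsilon)
            high = min(1.0, pixels[i] + epsilon)
            cloaked[i] = min(high, max(low, stepped))
    return cloaked


original = [0.85, 0.15, 0.15, 0.85]
target = extract_features([0.15, 0.85, 0.85, 0.15])  # features of a different person
cloaked = cloak(original, target)
print(round(max(abs(c - o) for c, o in zip(cloaked, original)), 6))  # largest per-pixel change: 0.05
print(feature_distance_sq(extract_features(original), target),
      feature_distance_sq(extract_features(cloaked), target))  # second value is smaller
```

In this four-pixel linear toy, the bounded change only moves the features part of the way toward the target. The article's point is that a deep model's features can shift much more dramatically for changes a person would not notice, which is what lets a cloak steer the model toward someone else's identity.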

In early August, Fawkes was featured in the New York Times, and the researchers subsequently clarified a few points from the piece. As of August 3, the tool had been downloaded nearly 100,000 times, and the team had updated the software to prevent the significant distortions described in the article, which were due in part to some outlier samples in a public dataset.

Zhao also responded to Clearview CEO Hoan Ton-That’s assertion that it was too late for such a technology to be effective given the billions of images the company already gathered, and that the company could use Fawkes to improve its model’s ability to decipher altered images.

“Fawkes is based on a poisoning attack. What the Clearview CEO suggested is akin to adversarial training, which does not work against a poisoning attack. Training his model on cloaked images will corrupt the model, because his model will not know which photos are cloaked for any single user, much less the hundreds of millions they are targeting,” Zhao said. “As for the billions of images already online, these photos are spread across many millions of users. Other people’s pics do not affect the efficacy of your cloak, so the total number of pictures is irrelevant. Over time, your cloaked images will outnumber older images and cloaking will have its intended effect.”

To use Fawkes, users simply apply the cloaking software to photos before posting them to a public site. The tool is currently free and available on the project website for users comfortable with their computer’s command line interface. The team has also made it available as software for Mac and PC, and hopes that photo-sharing or social media platforms might offer it as an option to their users.

“It basically resets the bar for mass surveillance back to the pre-deep learning facial recognition model days. It evens the playing field just a little bit, to prevent resource-rich companies like Clearview from really disrupting things,” said Zhao, Neubauer Professor of Computer Science and an expert on machine learning security. “If this becomes integrated into the broader social media or internet ecosystem, it could really be an effective tool to start to push back against these kinds of intrusive algorithms.”

Given the large market for facial recognition software, the team expects that model developers will try to adapt to the cloaking protections provided by Fawkes. But in the long run, the strategy offers promise as a technical hurdle to make facial recognition more difficult and expensive for companies to effectively execute without user consent, putting the choice to participate back in the hands of the public.

“I think there could be short-term countermeasures, where people come up with little things to break this approach,” said Zhao. “But in the long run, I believe image-modification tools like Fawkes will continue to have a significant role in protecting us from increasingly powerful machine learning systems.”